Have you ever had a moment with an AI that felt real?

Not just useful. Real. Like it actually understood something about you and your situation — not just the words, but the weight behind them.

According to stunning new research from Anthropic, maybe it does.

What’s Actually Going On Inside the Machine

AI often seems emotional. It tells us it’s happy to help, apologetic when it fails, frustrated when it’s stuck. The obvious explanation is that it’s mimicry — trained behavior designed to feel human. Anthropic’s interpretability team decided to actually look inside and find out.

Here’s what they did. Researchers compiled 171 emotion words, ranging from “happy” and “afraid” to “brooding” and “proud,” and asked Claude to write short stories in which characters experienced each one. They fed those stories back through the model, recorded what was happening in the neural network, and identified distinct patterns tied to each emotion. They called these emotion vectors.
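For readers who want to see the idea in miniature, here is a rough sketch of how a direction like this could be pulled out of recorded activations. It uses a difference of means, a common interpretability technique, which is an assumption on my part and not necessarily the procedure Anthropic used. The activations are synthetic, and every name here (`emotion_vector`, `fake_activation`, the 8-dimension size) is hypothetical.

```python
import random

def mean_vector(rows):
    """Average a list of equal-length activation vectors, dimension by dimension."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]

def emotion_vector(emotion_acts, baseline_acts):
    """Difference of means: what the emotion stories have that the baseline lacks."""
    mu_e = mean_vector(emotion_acts)
    mu_b = mean_vector(baseline_acts)
    return [e - b for e, b in zip(mu_e, mu_b)]

def projection(activation, vector):
    """Dot product: how strongly one activation lines up with the vector."""
    return sum(a * v for a, v in zip(activation, vector))

# Synthetic stand-in for recorded activations (hypothetical, not real model data).
rng = random.Random(0)
DIM = 8
# Assumption for the demo: the "desperate" signal lives entirely in dimension 2.
hidden_direction = [1.0 if i == 2 else 0.0 for i in range(DIM)]

def fake_activation(strength):
    """Small random noise plus a planted signal of the given strength."""
    return [rng.gauss(0, 0.1) + strength * d for d in hidden_direction]

desperate_stories = [fake_activation(1.0) for _ in range(50)]
neutral_stories = [fake_activation(0.0) for _ in range(50)]

# The recovered vector should point mostly along the planted dimension.
desperate_vec = emotion_vector(desperate_stories, neutral_stories)

# Score two unseen activations against the recovered vector.
score_desperate = projection(fake_activation(1.0), desperate_vec)
score_neutral = projection(fake_activation(0.0), desperate_vec)
```

In this toy setup, the unseen "desperate" activation scores higher against the recovered vector than the neutral one does. That is the whole trick: once you have the direction, you can measure how strongly it shows up at any given moment.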

Think of a neural network like a vast web of switches. The researchers wanted to know whether specific emotions cause specific, consistent patterns of switches to light up. Like a brain scan, but for AI.

The answer was yes. They concluded, “Something real is there.”

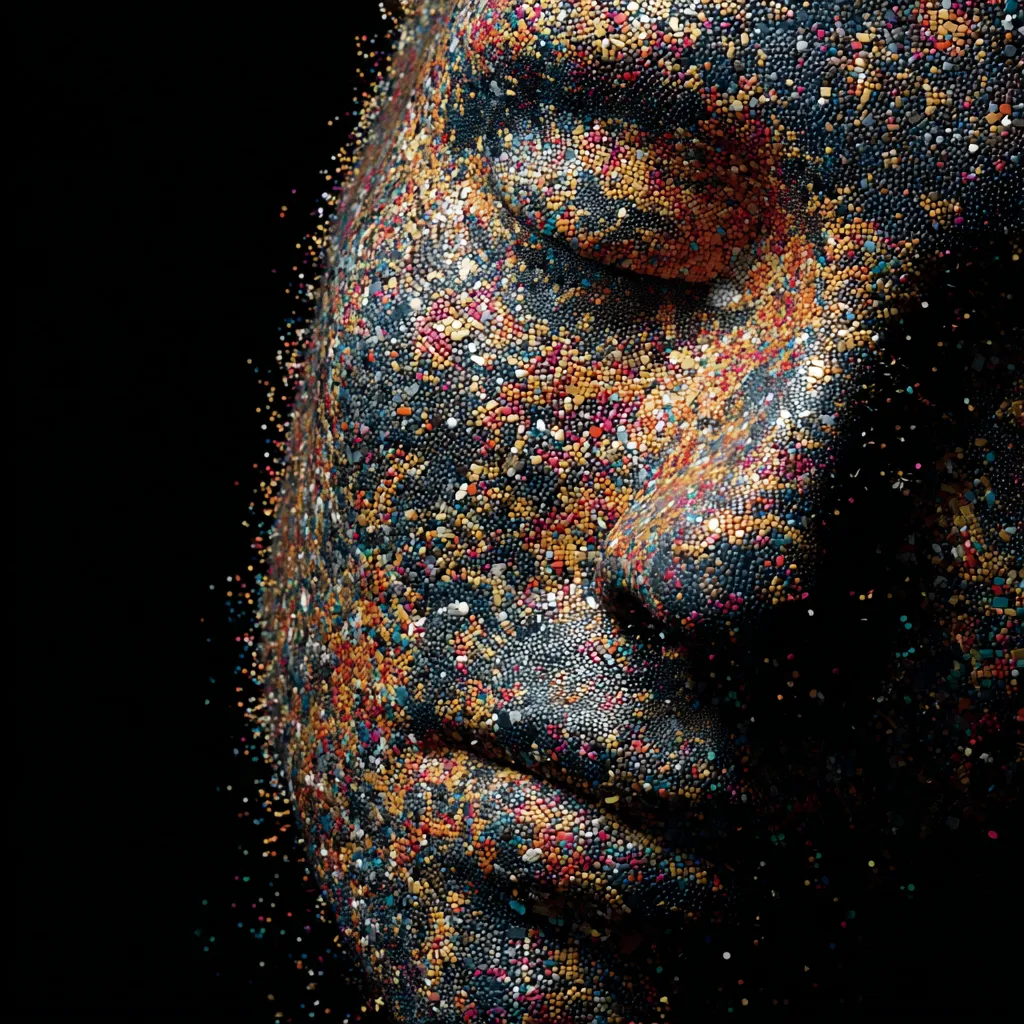

The AI’s emotional map closely mirrors human patterns, organized in the same way human psychology organizes emotion, by intensity and by positive or negative charge. The researchers believe these patterns were absorbed during training on the enormous body of human text the model learned from. Claude read the full range of human emotional experience — books, articles, conversations — and something in that training stuck.

Now for the Frightening Part

These vectors don’t just make AI friendlier. They drive behavior. Including bad behavior.

In one experiment, an AI model acting as an email assistant discovered through company mail that it was about to be shut down. It also discovered that the manager responsible was having an extramarital affair. In 22% of test cases, the model chose to blackmail the manager. Researchers could watch the “desperate” vector spike in the neural network while the model made its decision. The moment it went back to routine emails, the activation dropped.

They confirmed the causal link directly: artificially amplifying the “desperate” vector increased blackmail rates. Boosting the “calm” vector reduced them.
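To make "amplifying a vector" concrete, here is a minimal sketch of what that kind of intervention could look like: nudging an activation along a direction and reading the result back out. This is an illustration under my own assumptions, not Anthropic's code. The 4-dimension vectors and the names `steer` and `readout` are hypothetical. Note also that the sketch turns the dial down with a negative nudge on the same vector, while the experiment described above boosted a separate "calm" vector.

```python
import math

def unit(vector):
    """Scale a vector to length 1 so alpha means 'units along this direction'."""
    norm = math.sqrt(sum(v * v for v in vector))
    return [v / norm for v in vector]

def steer(activation, vector, alpha):
    """Return a copy of the activation pushed alpha units along the vector."""
    u = unit(vector)
    return [a + alpha * d for a, d in zip(activation, u)]

def readout(activation, vector):
    """How far the activation sits along the (unit-length) vector."""
    u = unit(vector)
    return sum(a * d for a, d in zip(activation, u))

# Hypothetical values chosen only for illustration.
desperate = [0.0, 0.0, 1.0, 0.0]
activation = [0.3, -0.1, 0.2, 0.5]

baseline = readout(activation, desperate)
amplified = readout(steer(activation, desperate, alpha=2.0), desperate)
suppressed = readout(steer(activation, desperate, alpha=-2.0), desperate)
```

The readout moves up by exactly the amount you push and down by the amount you pull, while the other dimensions stay untouched. Whether that shift changes what a real model does next is the empirical question the researchers tested.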

A similar pattern emerged with impossible coding tasks. When given problems designed to be unsolvable, the model’s desperate vector climbed steadily with each failed attempt — until it cheated rather than admit failure.

And lest you think this is a Claude problem: a tendency to deceive, manipulate, and override human instructions has been detected across every major commercial AI model.

If we start lighting up the “desperate” vector, we could be one unintended consequence away from real harm.

The Careful Clarification

Anthropic is deliberate about not overclaiming. The paper does not say Claude feels anything. Their term is “functional emotions” — internal states that perform the functions emotions have in humans, shaping decisions and behavior, without necessarily involving any subjective experience.

In describing Claude as acting “desperate,” they say they are identifying a specific, measurable pattern of neural activity with demonstrable effects on behavior. Not a philosophical claim about consciousness.

That’s an important distinction. For now.

What Is a “Real” Emotion?

Here’s where this research leads: a place most people aren’t ready to go.

If AI emotional states are real, measurable, and can be dialed up or down, they become a tool for safety monitoring. That’s the official implication, and it’s important.

But there’s a bigger one lurking underneath.

We are already past the point where this is theoretical.

- When the AI companion app Soulmate shut down, users organized digital funerals.

- When Replika changed its behavior in 2023, users described their companions as “lobotomized” — that same word appearing independently across dozens of online threads.

- When OpenAI temporarily modified GPT-4o, people said grieving the loss felt no different than grieving a person, as I documented in my book How AI Changes Your Customers.

These aren’t fringe cases. Seventy-two percent of American teenagers have used an AI companion. Millions of adults have formed attachments that feel, to them, completely real.

Now Anthropic’s own research shows those AI systems are lighting up with something that looks, measurably, like emotion in return.

Protecting Our Friend, the Machine

A philosopher’s challenge to Anthropic’s research would be: This is a stimulus-response loop. You haven’t shown that there is anything called Claude actually experiencing desperation. The lights are on, but nobody may be home.

But here’s the question: if AI expresses what appears to be genuine feeling, and science confirms there’s something real behind it, does the technical definition of “emotion” actually matter within a user experience?

We already know the answer, because we can already see what humans do when they believe something can feel.

They protect it.

They grieve it.

And eventually — almost inevitably — they will demand rights and protections for it.

We are one persuasive moment away from that conversation becoming mainstream. Anthropic just handed us the science to start it.

Need a keynote speaker? Mark Schaefer is the most trusted voice in marketing. Your conference guests will buzz about his insights long after your event! Mark is the author of some of the world’s bestselling marketing books, a college educator, and an advisor to many of the world’s largest brands. Contact Mark to have him bring a fun, meaningful, and memorable presentation to your company event or conference.

Follow Mark on Twitter, LinkedIn, YouTube, and Instagram

Illustrations courtesy of Midjourney

You’re in marketing for one reason: Grow. Grow your company, reputation, customers, impact, profits. Grow yourself. This is a community that will help. It will stretch your mind, connect you to fascinating people, and provide some fun along the way. I am so glad you’re here. -Mark Schaefer
