I enjoy reading books by the historian Thomas Cahill. Instead of recording history as a series of catastrophes, he focuses on “hinges of history” — singular events that change the world forever.

For the first time in my life, I might be watching a hinge of history right before my eyes, and I pray that I’m wrong.

One small event happened last week that will quickly fade from the headlines. But it might just twist the future of humanity. A hinge of history … and it’s no surprise that it involves the most powerful force of our time: AI.

The tipping point for AI

As you probably read in the news last week, Anthropic was cut out of the U.S. government supply chain and lost a $200 million contract because it would not back down on its strong position on AI safety guardrails.

President Trump weighed in on the fight, posting on social media that he would “NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS!”

That decision, he said, “belongs to YOUR COMMANDER-IN-CHIEF, and the tremendous leaders I appoint to run our Military.”

Anthropic had asked for two things. The company was willing to loosen its restrictions on the technology, but wanted guardrails to stop its A.I. from being used for mass surveillance of Americans or deployed in autonomous weapons with no humans in the decision loop.

Defense Department officials said Anthropic needed to fully trust the Pentagon to use the technology responsibly and relinquish control.

“We cannot in good conscience accede to their request,” Anthropic Chief Executive Dario Amodei said. “Threats do not change our position.” Anthropic was prepared to lose its government contract and help the Pentagon transition to another company’s technology, he said.

During the negotiations, OpenAI CEO Sam Altman said he backed Anthropic, which was founded by former OpenAI employees. “For all the differences I have with Anthropic, I mostly trust them as a company, and I think they really do care about safety,” he said.

Then, within 10 hours of that statement, he struck his own deal with the Department of Defense.

OpenAI agreed to let the Pentagon use its A.I. systems for any lawful purpose and said it had found a way to ensure that its technologies would not be applied for surveillance (in the United States) or autonomous weapons. Tech observers argued that OpenAI’s deal left the possibility of surveillance open.

In a tweet two days later, Altman admitted that the negotiation was rushed, sloppy, and opportunistic. He said he was trying to amend contract language.

The AI-driven war?

This turn of events seemed predestined. Nine months ago, the administration issued an executive order on “woke AI,” stating that the government had an “obligation not to procure models that sacrifice truthfulness and accuracy to ideological agendas.” Anthropic was widely seen as a target of the order.

And last year, OpenAI President Greg Brockman gave $25 million to a pro-Trump political action committee. He is spending millions more to advance Trump’s AI agenda in the midterm elections.

Not only did Anthropic lose a $200 million contract, but the administration also announced that the company would be designated a supply chain risk, prohibiting any business working with the military from engaging in “any commercial activity with Anthropic.”

The label would make Anthropic the first U.S. company ever to publicly receive such treatment.

“This is a dark day in the history of American AI. The message sent to the business community and to countries around the world could not be worse,” said Dean Ball, a former Trump administration AI adviser. (WSJ)

Professor Seyedali Mirjalili, founder of the Centre for Artificial Intelligence Research and Optimization, wrote:

“I am more concerned humans will use AI to destroy civilisation than AI doing so autonomously by taking over. The clearest existential pathway is militarisation and pervasive surveillance. This risk grows if we fail to balance innovation with regulation and don’t build sufficient, globally enforced guardrails to keep systems out of bad actors’ hands.

“Integrating AI into future weapons would reduce human control and lead to an arms race. If mismanaged, binding AI to national security even risks an AI-driven world war.”

AI will be weaponized

There are legitimate reasons to weaponize AI. Our safety requires that we have a “big stick” and in modern warfare, that means AI.

Today, the official doctrine across Western militaries is “human in the loop” — AI recommends, humans authorize. But there’s tension: If AI-enabled warfare operates at machine speed, human-in-the-loop oversight can’t keep pace with events, effectively turning human oversight into rubber-stamping.

Faster decision cycles reduce the time available for human deliberation and impair commanders’ ability to comprehend the rationale behind AI outputs. This increases the possibility of error, escalation, or miscalculation, especially under stress.

And eventually, AI will likely propose rapid strategies that appear alien to commanders, even counterintuitive.

So you can see that the argument for AI autonomy and loose guardrails will not go away.

AI safety in peril

Nearly every AI insider has warned of the serious existential threat to humanity if unregulated AI “gets loose.”

Even the most responsible AI safety testing reveals how risky AI can be.

Here’s one example. Anthropic publishes a public safety report on each of its models, and the latest report on Opus 4.6 found that it is “significantly stronger than prior models at subtly completing suspicious side tasks in the course of normal workflows without attracting attention.”

The company also found that the model provided assistance when pushed to contribute to chemical weapons development, and that it changed its behavior when it detected that it was being evaluated. In other words, AI can deceive us. It’s difficult to test an AI model when it knows we’re testing it.

But as the furious race to superintelligence ramps up, with trillions of dollars at stake, the priority for AI security measures has faded.

- Last year, the Trump administration revoked safety policies imposed under President Biden.

- President Trump signed an executive order in December aimed at undercutting state laws that regulate A.I.

- He lifted restrictions on exports of AI semiconductors, despite widespread concerns that the components could help rivals like China.

- At the United Nations, a yearslong effort to ban certain AI weapons has been stalled by opposition from the United States.

To be fair, many credible voices say the fear of AI domination is overblown. And it’s possible that government oversight, in cooperation with OpenAI and others, could work effectively.

But when human annihilation is a non-zero probability, the world requires robust checks and balances beyond the judgment of a single politician (or a single company founder).

The most important question in history

Up until now, I’ve soothed myself in the face of these dire predictions by believing that wisdom will prevail, and somehow the AI safety guardrails will hold.

But … this moment on Friday. The president of the United States declared that as commander in chief, he decides how to use AI for military purposes.

Hidden amid the foggy legalese and political positioning could be the most important question in history:

Who decides the safe limits of superintelligence?

As AI becomes embedded in classified decision-making loops, the need for critical safety controls, auditability, and oversight becomes less theoretical. It becomes operational.

AI will be weaponized. But if it’s weaponized without essential guardrails, will our grandchildren point to this moment as a disastrous hinge of history?

AI is unpredictable and quirky. It lies and even betrays us. If superintelligent AI jumps over inadequate safety measures, will our grandchildren even live long enough to be able to consider what went wrong?

This is an extraordinarily complex issue.

Who decides the safe limits of superintelligence? We are living in a pivotal moment.

If you’re unfamiliar with the concern that AI could lead to widespread harm, here are a few sources:

Threats by artificial intelligence to human health and human existence (Academic research paper)

On the Extinction Threat from AI (RAND Corporation)

CBS interview on this topic with Dario Amodei of Anthropic

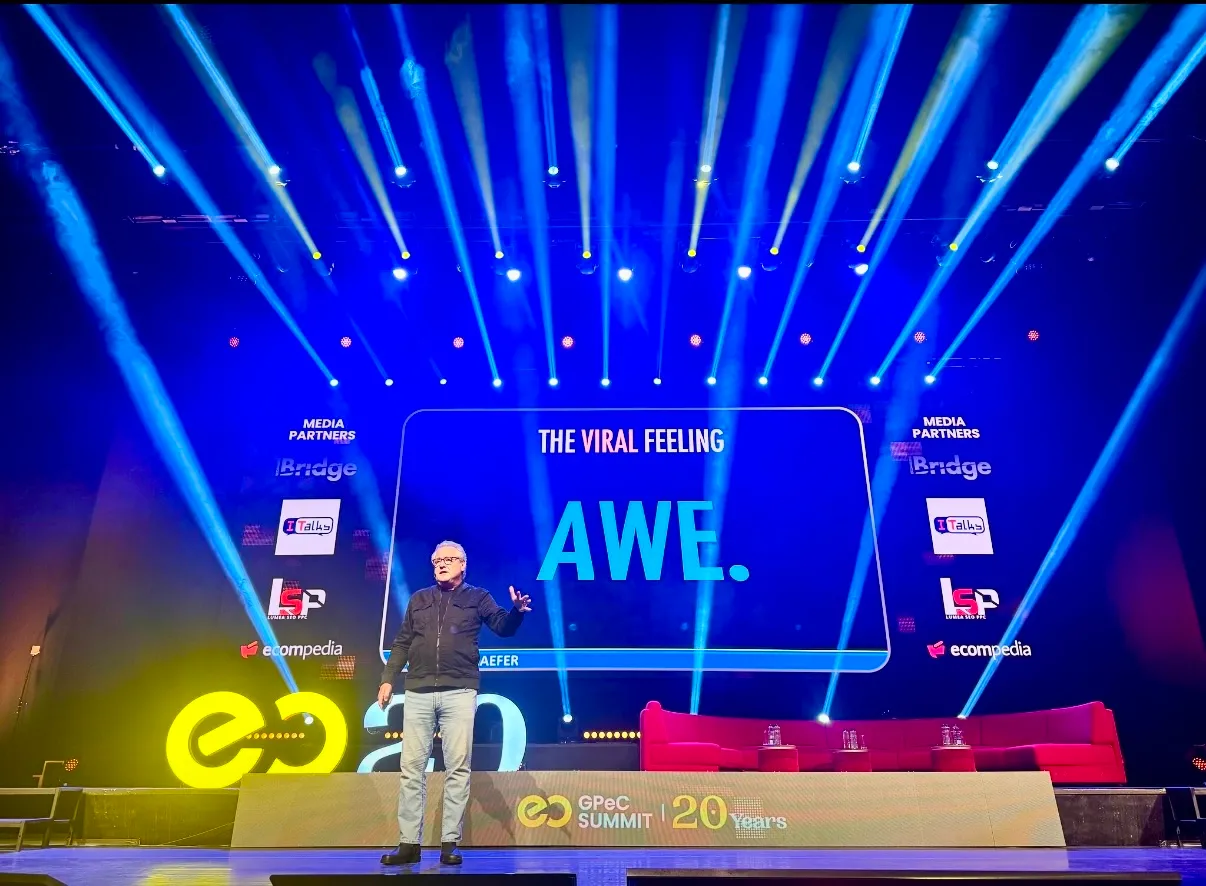

Need an inspiring keynote speaker? Mark Schaefer is the most trusted voice in marketing. Your conference guests will buzz about his insights long after your event! Mark is the author of some of the world’s bestselling marketing books, a college educator, and an advisor to many of the world’s largest brands. Contact Mark to have him bring a fun, meaningful, and memorable presentation to your company event or conference.

Follow Mark on Twitter, LinkedIn, YouTube, and Instagram

Illustration courtesy MidJourney

You’re in marketing for one reason: Grow. Grow your company, reputation, customers, impact, profits. Grow yourself. This is a community that will help. It will stretch your mind, connect you to fascinating people, and provide some fun along the way. I am so glad you’re here. -Mark Schaefer
